- AI is already impacting societies and enterprises in countless ways; enterprises are embracing AI and moving quickly to operationalize it, along with autonomous agents, “the non-human human.”

- Still, a number of adoption challenges exist, including in the key area of GenAI observability.

- By understanding and addressing observability challenges in each layer of the AI stack, enterprises can mitigate risk and move more quickly to successful AI adoption.

In a frequently cited Wall Street Journal essay [1], Marc Andreessen famously declared that "software is eating the world." Today, this insight seems equally apt for artificial intelligence. AI isn't a narrow add-on to the enterprise; it is fast becoming integral to the products and services enterprises offer and to their internal processes.

A truism of technology companies is that their core competency lies in disrupting the status quo with innovative technologies, turning industry-wide change into a competitive advantage. For enterprises experimenting with, adopting and deriving value from AI, the core questions are familiar:

- How do we minimize or manage risk at scale and at speed?

- What is the shortest path to value that fits our organization?

AI is in its first wave of disruption. Most organizations did not budget initially for AI. Only a select few have formally trained their teams (coders, QA, users or business owners) on its use, risks or potential. And arguably, all are urgently pivoting to the world of AI as entire industries leap from proof-of-concept to operations. In short order, business owners and shareholders will be asking to see the return on investment.

“84% of C-suite executives believe they must leverage Artificial Intelligence (AI) to achieve their growth objectives” [2].

“Only 11% of organizations have agents in production, despite 38% piloting them” [3].

As we move from the proof-of-concept phase to AI-in-operations, barriers to adoption persist. At the same time, expectations for a positive return on investment for AI are rising.

AI Adoption Challenges

In the Deloitte report cited above, the analysts identify “enterprises attempting to wrap cutting-edge GenAI around broken, legacy processes instead of redesigning the process” as one of the challenges. Other enterprises have reported similar challenges.

- Escalating usage: Even before buyers and users fully understand pricing models, token consumption is skyrocketing and the IT resources underlying AI (applications, APIs, infrastructure and networks) are running at capacity, working at limits ‘Mother Nature never intended’. User behavior is also changing in ways that company policies may not have anticipated.

- Identifying and vetting AI use cases: At some enterprises, CXOs note that the quick pivot from pilot to operations means use cases are not fully vetted before eager teams begin adoption. As a result, projects may fail prematurely, and the organization may learn the wrong lessons from a well-intentioned effort.

- Organizational challenges: AI adoption transcends any particular tool or technology, and so do its challenges. Many involve the human aspects of collaboration, organizational behavior and organizational culture. Like any disruptive technology, AI stretches, or may entirely replace, longstanding processes that are proven and battle-tested. AI adoption demands a high degree of collaboration across teams, armed with data they can debate and interpret, so that people can challenge assumptions and course-correct appropriately.

- Latency, cost overruns, inadequate risk controls, etc.: This broad topic spans multiple disciplines, including GenAI observability, governance and risk. Technical teams should ask questions such as:

  - What were the specific prompts entered by the user?

  - Which applications were called?

  - What data or content resources were utilized by the AI?

  - What was the output delivered to the user in response to the prompt?

  - How many input and/or output tokens were consumed?
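One way to make these questions answerable is to log a structured record for every AI interaction. The following is a minimal sketch, not a prescribed schema: the `InteractionRecord` class, its field names and the sample values are all hypothetical and chosen only to mirror the five questions above.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class InteractionRecord:
    """One GenAI request/response pair (hypothetical schema)."""
    prompt: str                                                    # what the user entered
    applications_called: list[str] = field(default_factory=list)  # tools or APIs invoked
    resources_used: list[str] = field(default_factory=list)       # data or content consulted
    output: str = ""                                               # what was returned to the user
    input_tokens: int = 0
    output_tokens: int = 0

    @property
    def total_tokens(self) -> int:
        return self.input_tokens + self.output_tokens

    def to_json(self) -> str:
        """Serialize the record, including the derived token total."""
        payload = asdict(self)
        payload["total_tokens"] = self.total_tokens
        return json.dumps(payload, sort_keys=True)


# Illustrative values only.
record = InteractionRecord(
    prompt="Summarize open incidents for the payments service",
    applications_called=["incident_api"],
    resources_used=["runbook-payments.md"],
    output="Two incidents are open.",
    input_tokens=412,
    output_tokens=96,
)
print(record.to_json())
```

Emitting one such record per request gives audit, cost and performance teams a shared starting point, whatever logging or tracing backend is actually in use.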

Answers to these questions will help organizations avoid pitfalls common to disruptive technologies. To maximize the ROI opportunity, leadership must take a holistic approach to each of these challenges, including GenAI observability across the three critical pillars explained below.

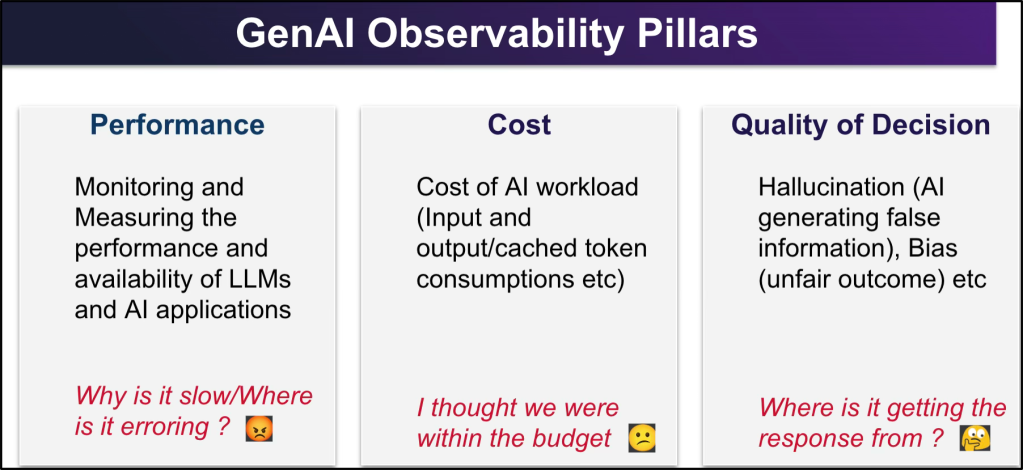

The Three Pillars of GenAI Observability

Observability is the ability to understand a system's internal state from the data it emits. Because GenAI is complex and generates rich IT operations data, IT teams are well positioned to spot these challenges before they affect the business or its users.

1. Performance: From Latency to Operational Resonance

With the emergence of GenAI, monitoring and managing performance must extend beyond traditional measures of uptime and throughput. Teams need to understand where time is spent. When performance issues arise, more precise information is needed to determine whether the culprit is model inference, vector retrieval or something in the orchestration layer. Even small amounts of latency, multiplied across large-scale AI workloads, can result in an intolerable user experience and higher costs.

Conversely, if observability is lacking, teams may err on the side of over-provisioning for simple tasks, especially when they are moving quickly from one model version to another and have a low tolerance for performance risk.
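The latency breakdown described in this section can be sketched with a small per-stage timer. This is an illustrative sketch only: `StageTimer`, `handle_request` and the stage names are hypothetical, and the `time.sleep` calls stand in for real retrieval, inference and orchestration work.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named stage of a request."""

    def __init__(self):
        self.totals = defaultdict(float)  # stage name -> seconds

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record elapsed time even if the stage raises.
            self.totals[name] += time.perf_counter() - start

    def slowest(self):
        """Return the stage that consumed the most time."""
        return max(self.totals, key=self.totals.get)


def handle_request(timer):
    # The sleeps below are placeholders for real work.
    with timer.stage("vector_retrieval"):
        time.sleep(0.01)
    with timer.stage("model_inference"):
        time.sleep(0.05)
    with timer.stage("orchestration"):
        time.sleep(0.005)


timer = StageTimer()
handle_request(timer)
for name, seconds in timer.totals.items():
    print(f"{name}: {seconds * 1000:.1f} ms")
print("slowest stage:", timer.slowest())
```

In practice a distributed tracing library would typically play this role; the point of the sketch is that each stage gets its own measurement, so a slow response can be attributed to a specific stage rather than to "the AI" as a whole.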

2. Cost: Managing the Token Economy

Organizations are rightfully sensitive to escalating costs, especially when the value of AI has not been established or fully monetized. When teams responsible for expense management rely on traditional usage reports, their understanding of costs will be perpetually outdated to the point where cost reporting becomes a liability.

The Leadership Mandate: For an accurate, near-real-time understanding of AI costs, teams need a robust observability framework that can track input/output tokens, cache hits and vector database usage. This allows teams to understand usage patterns from a cost standpoint, respond faster to changing situations and more confidently present budget requirements vis-à-vis investment opportunities. This is critically important for enterprises where AI commitment decisions are commonly made at the board or corporate director level while cost implications are felt at the IT or operations level. Tracking better AI measures helps to bridge this gap.
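As a rough illustration of how those tracked quantities translate into cost, the sketch below prices a single request from its token counts, cache hits and vector database queries. Everything here is an assumption made for illustration: the `PriceSheet` rates are invented placeholders rather than any provider's actual pricing, and `request_cost` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceSheet:
    # Hypothetical USD prices per 1,000 units; substitute real contract rates.
    input_per_1k: float = 0.003
    cached_input_per_1k: float = 0.0003
    output_per_1k: float = 0.015
    vector_query_per_1k: float = 0.10


def request_cost(prices, input_tokens, cached_input_tokens, output_tokens, vector_queries):
    """Estimate the cost of one request from its tracked usage counters."""
    uncached = input_tokens - cached_input_tokens
    if uncached < 0:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    units = (
        uncached * prices.input_per_1k
        + cached_input_tokens * prices.cached_input_per_1k
        + output_tokens * prices.output_per_1k
        + vector_queries * prices.vector_query_per_1k
    )
    return units / 1000


prices = PriceSheet()
with_cache = request_cost(prices, input_tokens=2000, cached_input_tokens=1500,
                          output_tokens=400, vector_queries=3)
without_cache = request_cost(prices, input_tokens=2000, cached_input_tokens=0,
                             output_tokens=400, vector_queries=3)
print(f"with cache hits:    ${with_cache:.5f}")
print(f"without cache hits: ${without_cache:.5f}")
```

Aggregating a figure like this per team, per use case or per model version, as requests happen rather than from a month-end report, is what lets cost reporting keep pace with usage.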

3. The Quality of Decision: Solving for Probability

Unlike traditional code, AI is probabilistic. To ensure accuracy and reduce hallucination and bias, IT teams must move beyond familiar and proven quality and performance management approaches to a new set of AI-centric best practices. These include:

- Reference Tracking: Grounding responses in external data, and recording which sources were used, to reduce hallucination and bias.

- Call Path Tracing: Differentiating "good" execution paths from "bad" ones to refine agent behavior.

- Intermediate Query Tracking: Important not only for optimization and performance profiling but also for audit and governance.

- Feedback Loops: Quantifying user interactions to derive a confidence score for each AI response.
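The feedback-loop idea can be made concrete with a simple scoring function. The source does not specify how a confidence score should be computed, so the formula below is an assumption: a Laplace-smoothed approval ratio over hypothetical thumbs-up and thumbs-down counts, which starts at a neutral 0.5 when no feedback exists and moves toward the observed ratio as feedback accumulates.

```python
def confidence_score(thumbs_up: int, thumbs_down: int) -> float:
    """Laplace-smoothed share of positive feedback, in the open interval (0, 1).

    Adding one imaginary positive and one imaginary negative vote keeps a
    response with very little feedback from scoring exactly 0.0 or 1.0.
    """
    if thumbs_up < 0 or thumbs_down < 0:
        raise ValueError("feedback counts must be non-negative")
    return (thumbs_up + 1) / (thumbs_up + thumbs_down + 2)


# Illustrative counts only.
print(confidence_score(0, 0))    # no feedback yet
print(confidence_score(1, 0))    # a single positive vote
print(confidence_score(8, 2))    # mostly positive feedback
```

Other signals, such as whether the user rephrased the prompt or abandoned the session, could feed a richer score; the smoothed ratio is only a starting point.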

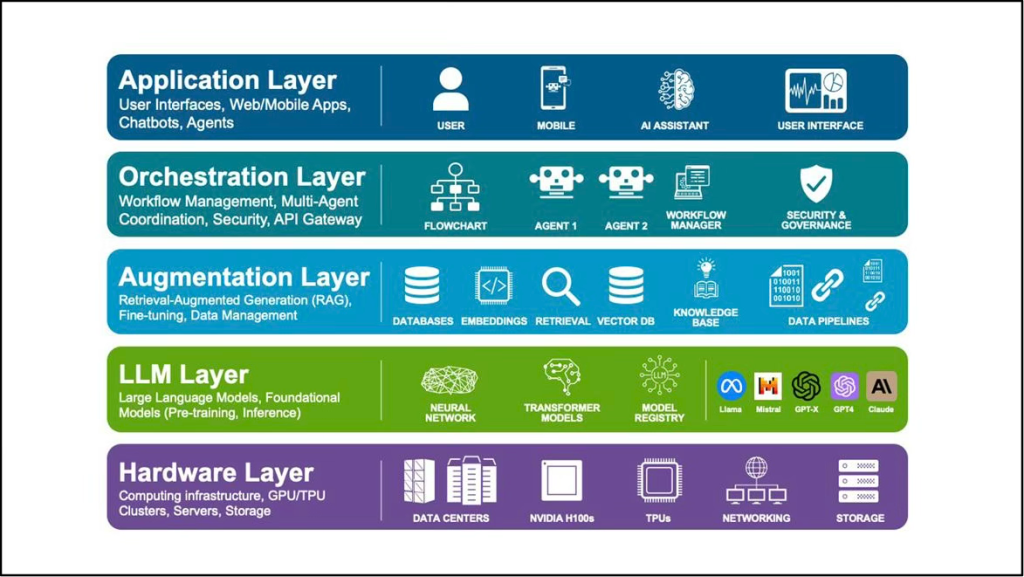

The AI Full Stack Architecture: A Strategic View

To gather data for performance, cost and quality measures for AI, and especially GenAI, IT teams need a deep understanding of all five layers of the modern AI stack. While this may seem like a near-insurmountable requirement, IT operations teams using modern AIOps and observability technologies can readily obtain the data they need.

Stated another way, the challenge IT teams face is to stitch together the full scope of AI-related data so that it yields the insights needed to efficiently manage AI infrastructures that meet expectations for performance, cost and quality of decisions. The table below outlines, at a high level, the types of data that can provide those insights.

| Architecture Layer | Strategic Focus | What to Observe |
| --- | --- | --- |
| Hardware | Foundation & Compute | GPU/TPU utilization and network throughput. |
| LLM (The Brain) | Core Intelligence | Latency profiles, version comparisons, and token consumption. |
| Augmentation | Domain Expertise | Vector DB accuracy scores and RAG (Retrieval-Augmented Generation) performance. |
| Orchestration | Execution & Agency | Agent identity, security protocols, and autonomous decision paths. |
| Application | User Experience | End-user latency and 3rd-party library dependencies. |
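Stitching data across the five layers usually comes down to correlating events that belong to the same request. The sketch below assumes, hypothetically, that every event carries a shared `trace_id` and a `layer` label matching the table above; the event fields, metric names and values are invented for illustration.

```python
from collections import defaultdict

# Layer names mirror the table above (lowercased).
LAYERS = ("hardware", "llm", "augmentation", "orchestration", "application")


def stitch(events):
    """Group telemetry events by trace ID, then by architecture layer."""
    traces = defaultdict(lambda: defaultdict(list))
    for event in events:
        if event["layer"] not in LAYERS:
            raise ValueError(f"unknown layer: {event['layer']}")
        traces[event["trace_id"]][event["layer"]].append(event)
    return traces


def missing_layers(trace):
    """List the layers that produced no events for this trace."""
    return [layer for layer in LAYERS if layer not in trace]


# Illustrative events for a single request.
events = [
    {"trace_id": "t-1", "layer": "application", "metric": "end_user_latency_ms", "value": 1840},
    {"trace_id": "t-1", "layer": "orchestration", "metric": "agent_steps", "value": 4},
    {"trace_id": "t-1", "layer": "llm", "metric": "output_tokens", "value": 96},
    {"trace_id": "t-1", "layer": "augmentation", "metric": "retrieval_ms", "value": 210},
]

traces = stitch(events)
print(missing_layers(traces["t-1"]))  # ['hardware']
```

A gap report like this shows where instrumentation is missing, here the hardware layer, before a team tries to reason about end-to-end performance or cost.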

The Path Forward

The race to successful AI will not necessarily be won by enterprises with the largest AI models or the greatest investments. To navigate the disruptive transitions brought about by AI and achieve their desired results, enterprises should elevate the role of IT operations and make superior use of AI-related observability data. Organizations that do so will be armed with the information to:

- Make smart decisions that balance performance, cost and decision-quality risks as they transition from pilot to operations

- Avoid learning the wrong lessons from premature failure of AI initiatives

- Bridge the vision established by boardrooms and corporate directors with ‘feet-on-the-street’ understanding from IT

- Move confidently to value realization for AI initiatives

By looking at AI holistically, addressing the challenges noted above and moving AI observability from a technical afterthought to a core business function, leaders can accelerate enterprise-wide transformation.

Resources:

- For information on AIOps and Observability from Broadcom, visit broadcom.com/aiops

- Learn about DX Operational Observability here.

Sources:

1 - Why Software Is Eating The World, Wall Street Journal, 2011

2 - AI: Built to Scale, Accenture, 2019

3 - Tech Trends 2026, Deloitte, 2026